polya gamma augmentation

DongyueXie

2020-09-21

Last updated: 2020-10-27

Checks: 7 0

Knit directory: misc/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20191122) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 5a6cc2a. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Untracked files:

Untracked: analysis/bayeslogit.md

Untracked: analysis/polya_gamma.md

Untracked: code/kowal2019code/

Untracked: code/logistic.R

Unstaged changes:

Modified: analysis/test_typora.md

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/polya_gamma.Rmd) and HTML (docs/polya_gamma.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 5a6cc2a | DongyueXie | 2020-10-27 | wflow_publish(c(“analysis/index.Rmd”, “analysis/kl.Rmd”, “analysis/polya_gamma.Rmd”, |

| html | 96caa31 | DongyueXie | 2020-09-28 | Build site. |

| Rmd | c23dea6 | DongyueXie | 2020-09-28 | wflow_publish(“analysis/polya_gamma.Rmd”) |

| html | eb32629 | DongyueXie | 2020-09-21 | Build site. |

| Rmd | 241207b | DongyueXie | 2020-09-21 | wflow_publish(c(“analysis/index.Rmd”, “analysis/polya_gamma.Rmd”, |

An important idea, both in understanding and computing discrete-data regressions, is a re-expression in terms of unobserved (latent) continuous data. – BDA

Bayesian logistic regression

The likelihood of \(y_i\) is \(L(y_i|\beta) = (\frac{\exp{x_i^T\beta}}{1+\exp{x_i^T\beta}})^{y_i}(\frac{1}{1+\exp{x_i^T\beta}})^{1-y_i}\).

For probit link function, we can introduce a latent variable,

\[\begin{array}{l} u_{i} \sim \mathrm{N}\left(X_{i} \beta, 1\right) \\ y_{i}=\left\{\begin{array}{ll} 1 & \text { if } u_{i}>0 \\ 0 & \text { if } u_{i}<0 \end{array}\right. \end{array}\]

For logit link function, we can replace normal distribution with \(u_{i} \sim \mathrm{logistic}\left(X_{i} \beta, 1\right)\).

(Using MH algorithm to draw posteriors is straightforward, but the logistic error introduces difficulty of other inferences like variational inference)

(what’s the advantage of data-augmentation over MH algorithm? faster converging? easier computation?)

Definition

X follows polya-gamma distribution with parameters \(b>0\) and \(c\in R\) if \[X\overset{D}{=}\frac{1}{2\pi^2}\sum_{k=1}^\infty\frac{g_k}{(k-1/2)^2+c^2/(4\pi^2)},\] where \(g_k\sim Gamma(b,1)\).

Binomial likelihoods parameterized by log odds can be represented as mixtures of Gaussians with respect to a P´olya-Gamma distribution.

Properties

\[\frac{\left(e^{\psi}\right)^{a}}{\left(1+e^{\psi}\right)^{b}}=2^{-b} e^{\kappa \psi} \int_{0}^{\infty} e^{-\omega \psi^{2} / 2} p(\omega) d \omega,\] where \(\kappa = a-b/2\), and \(\omega\sim PG(b,0)\).

The density of a Polya-Gamma random variable can be expressed as an alternating-sign sum of inverse-Gaussian densities \[f(x \mid b, c)=\left\{\cosh ^{b}(c / 2)\right\} \frac{2^{b-1}}{\Gamma(b)} \sum_{n=0}^{\infty}(-1)^{n} \frac{\Gamma(n+b)}{\Gamma(n+1)} \frac{(2 n+b)}{\sqrt{2 \pi x^{3}}} e^{-\frac{(2 n+b)^{2}}{8 x}-\frac{c^{2}}{2} x}\]

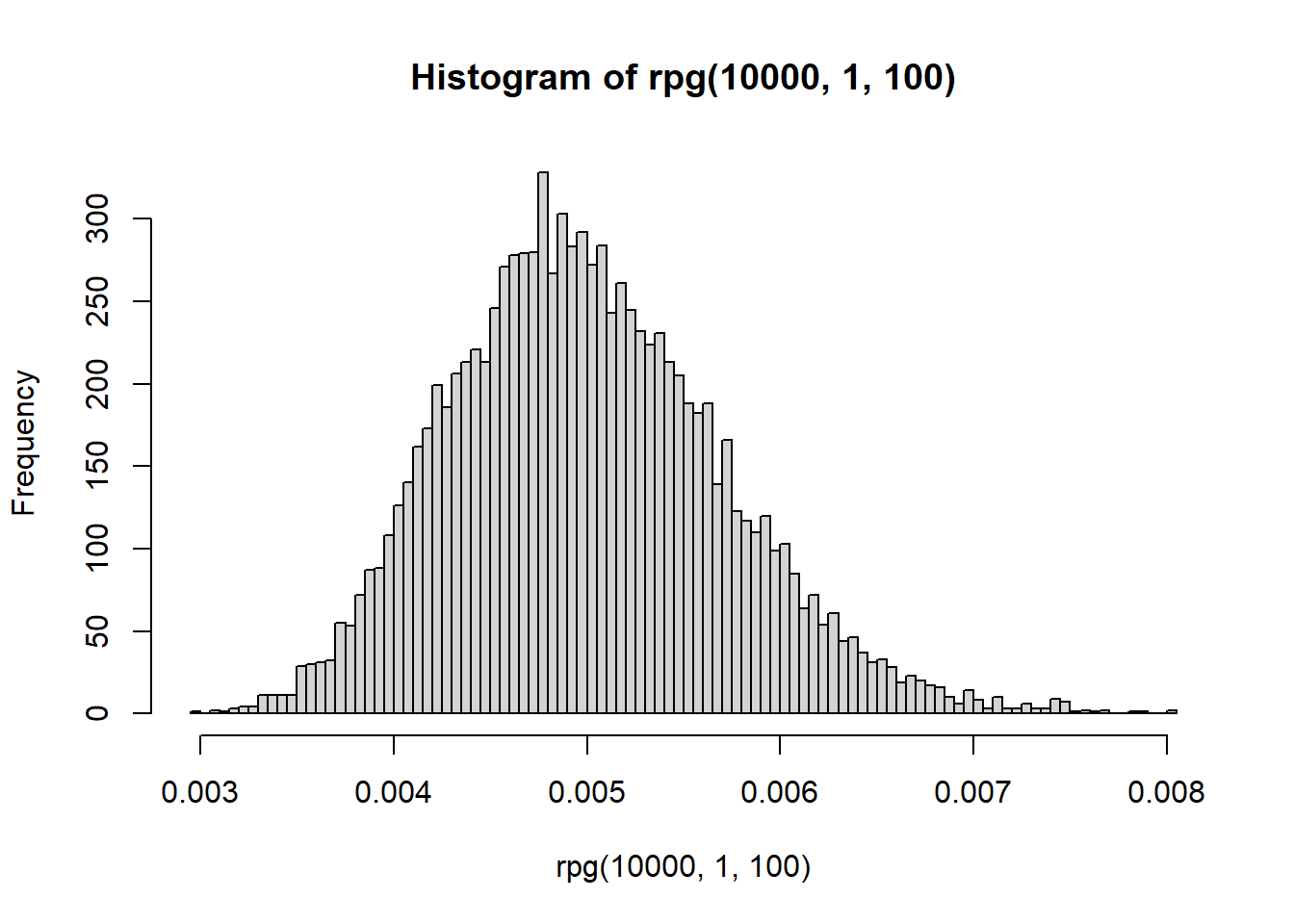

All finite moments of a Polya-Gamma random variable are available in closed form. In particular, the expectation may be calculated directly. This allows the Polya-Gamma scheme to be used in EM algorithms. If \(\omega\sim PG(b,c)\), then \(E(\omega) = \frac{b}{2c}tanh(c/2) = \frac{b}{2c}(\frac{e^c-1}{1+e^c})\). The variance can be found here

If \(w_1\sim PG(b_1,c)\) and \(w_2\sim PG(b_2,c)\) then \(w_1+w_2\sim PG(b_1+b_2,c)\)

Augmentation

Let \(y_i\sim Binomial(n_i,\frac{1}{1+e^{-\phi_i}})\), where \(\phi_i\) are log odds of success. In logistic regression, \(\phi_i = x_i^T\beta\).

THe likelihood contribution of observation \(i\) is

\[L_i(\phi_i) = \frac{(\exp\phi_i)^{y_i}}{(1+\exp(\phi_i))^{n_i}}.\]

In logistic regression, the likelihood is

\[\begin{aligned} L_{i}(\boldsymbol{\beta}) &=\frac{\left\{\exp \left(x_{i}^{T} \boldsymbol{\beta}\right)\right\}^{y_{i}}}{(1+\exp \left(x_{i}^{T} \boldsymbol{\beta}\right))^{n_i}} \\ & \propto \exp \left(\kappa_{i} x_{i}^{T} \boldsymbol{\beta}\right) \int_{0}^{\infty} \exp \left\{-\omega_{i}\left(x_{i}^{T} \boldsymbol{\beta}\right)^{2} / 2\right\} p\left(\omega_{i} \mid n_{i}, 0\right) \end{aligned},\]

where \(\kappa_i - y_i-n_i/2\).

The conditional posterior of \(\beta\) is \[p(\beta|w,y)\propto p(\beta)\exp\{-\frac{1}{2}(z-X\beta)^T\Omega(z-X\beta)\} = p(\beta)\exp\{-\frac{1}{2}(\beta-X^{-1}z)^TX^T\Omega X(\beta-X^{-1}z)\},\] where \(z = (\kappa_1/w_1,...,\kappa_n/w_n)\) and \(\Omega = diag(w_1,...,w_n)\).

Posterior

If the prior of \(\beta\) is Gaussian, then the conditional posterior of \(\beta\) is also Gaussian. So the Gibbs sampler iteratively samples from \((\omega_i|\beta)\sim PG(n_i,x_i^T\beta), (\beta|y,\Omega)\sim N(m,V)\).

Simulation

PG distribution

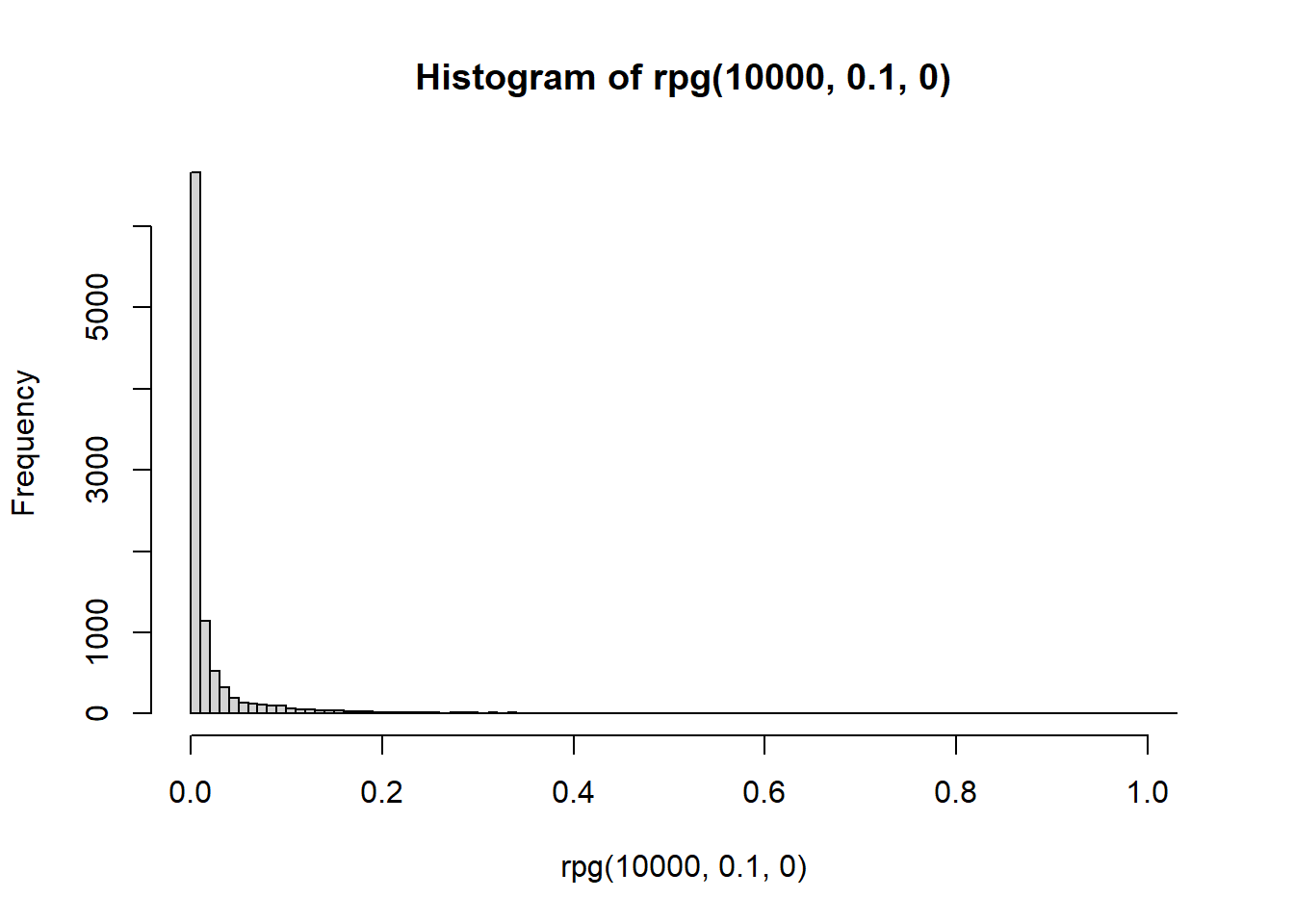

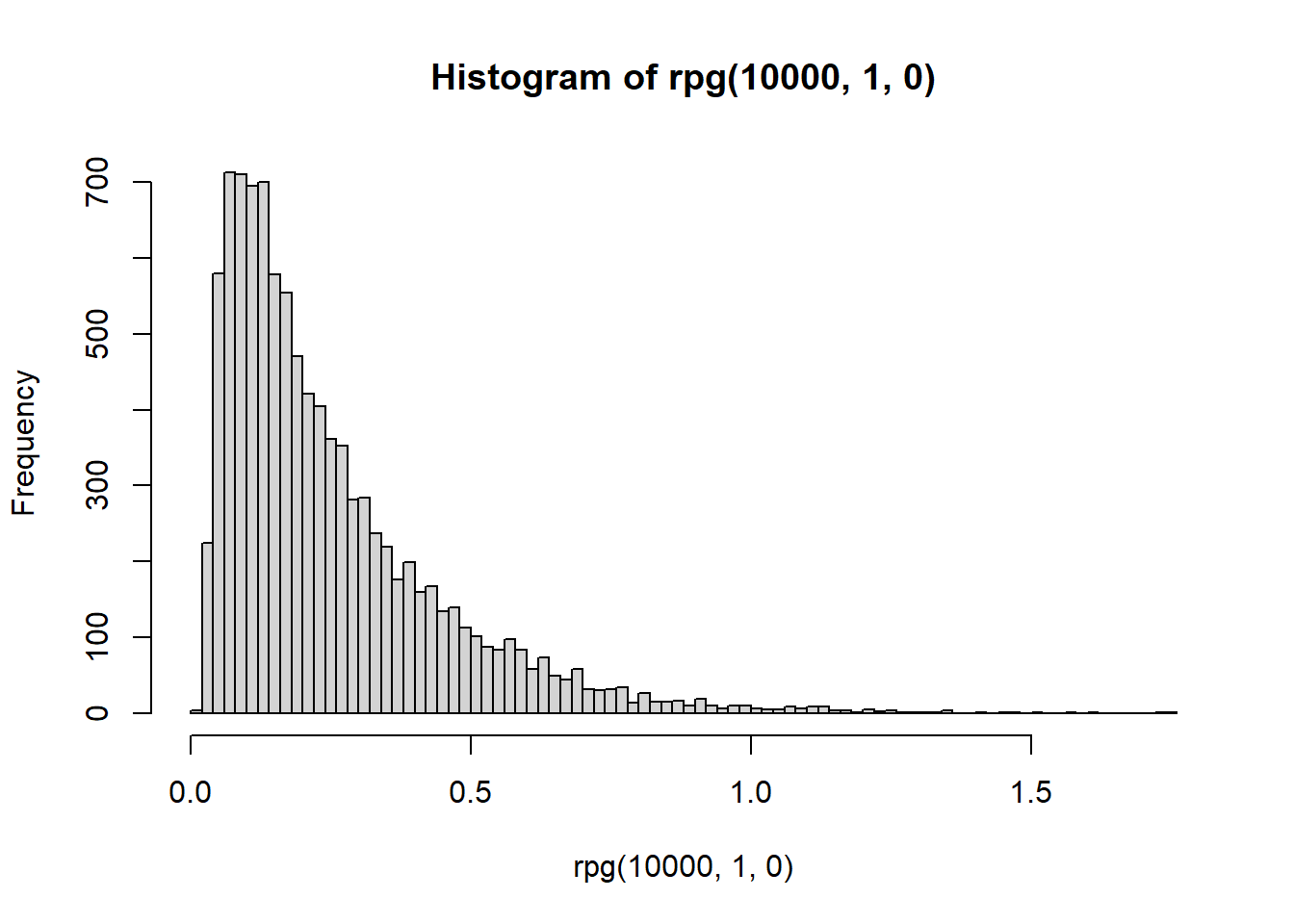

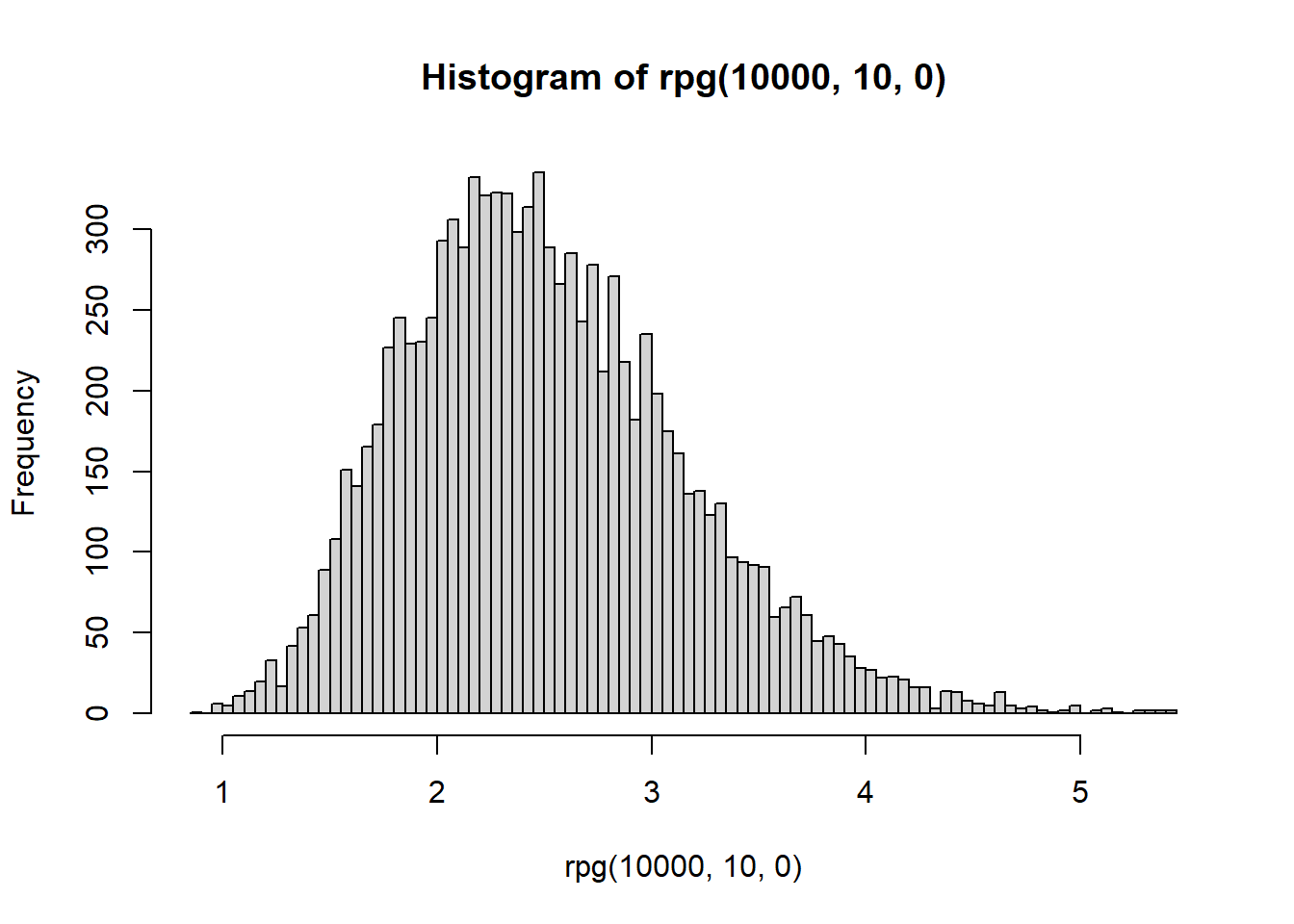

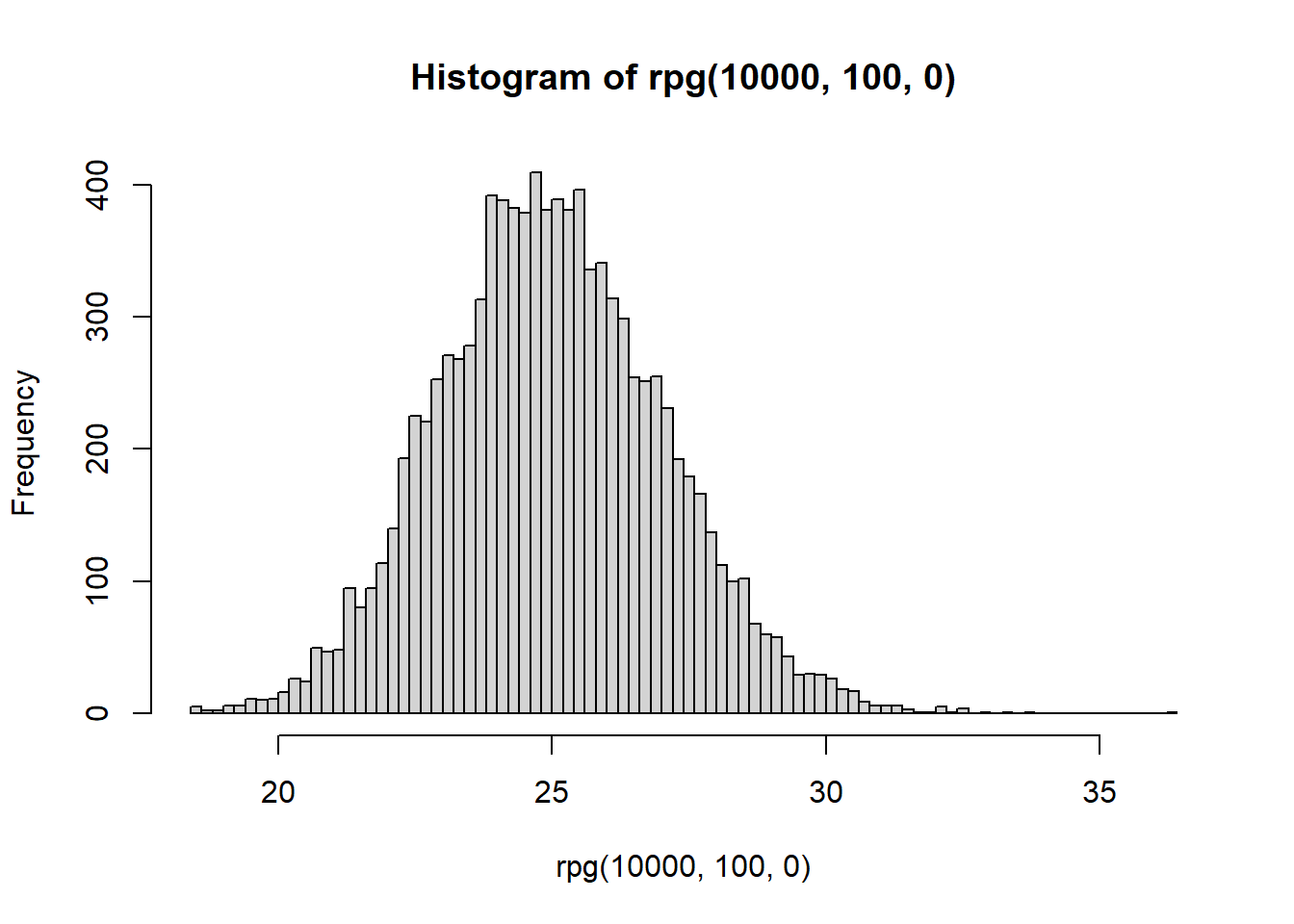

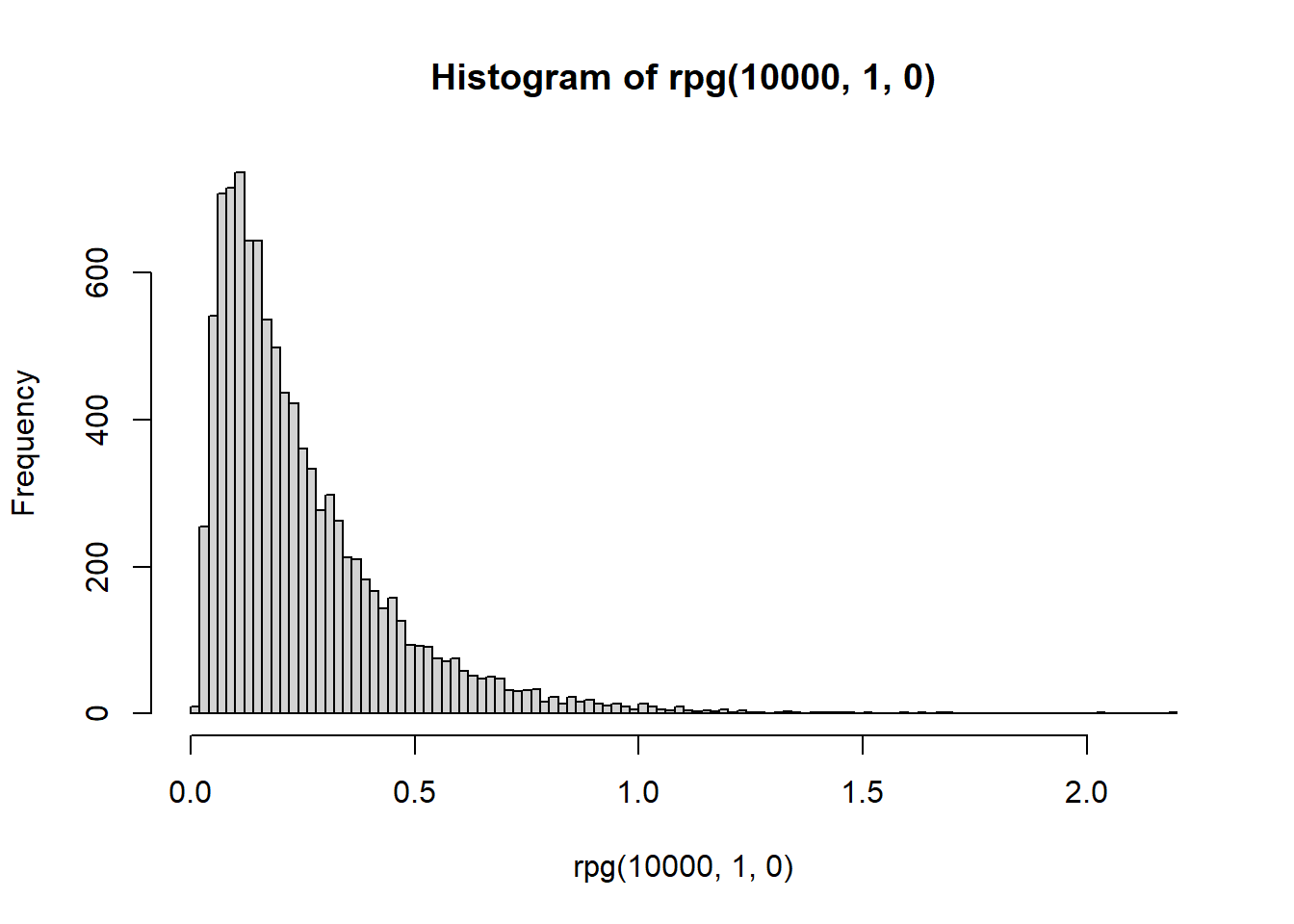

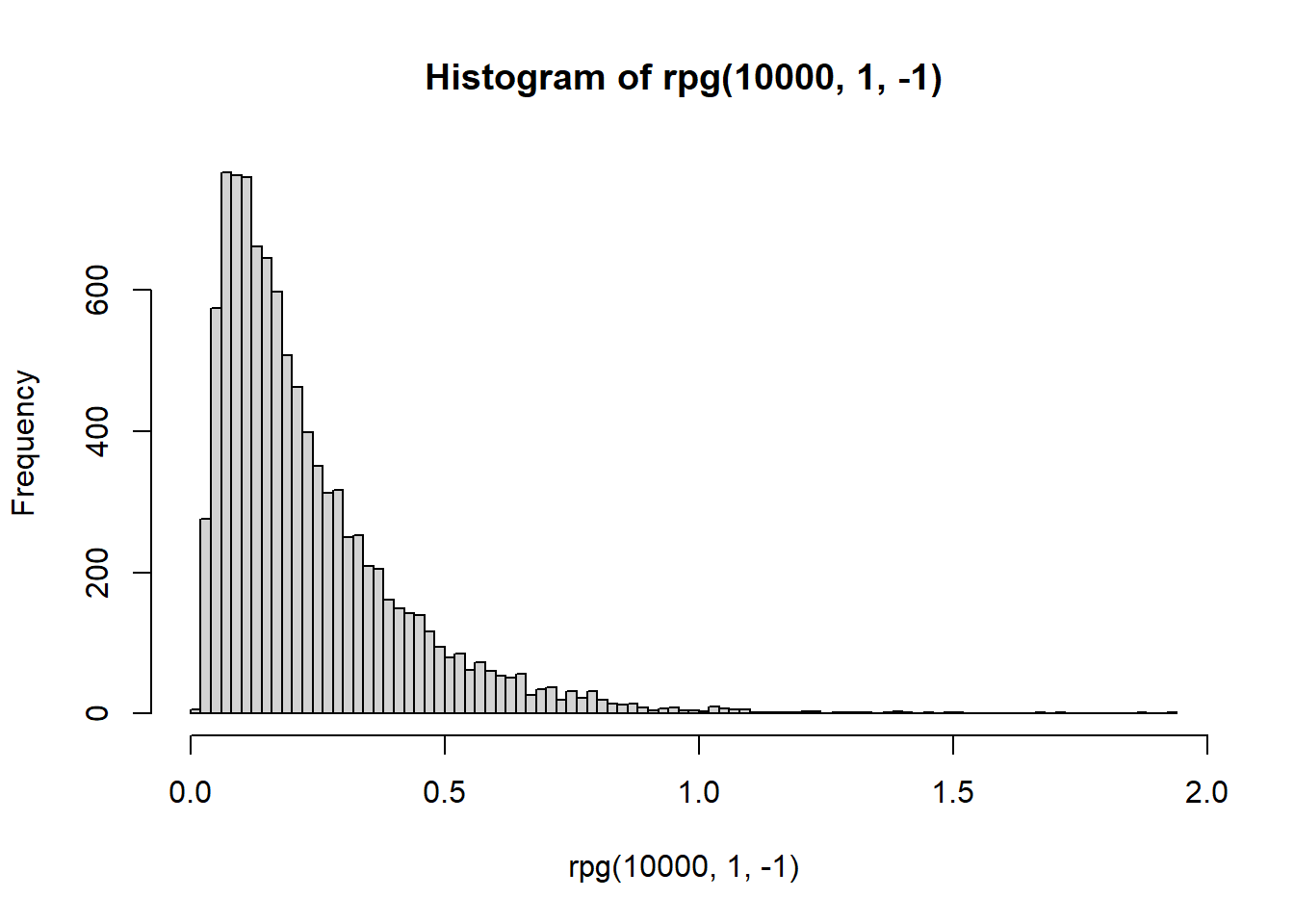

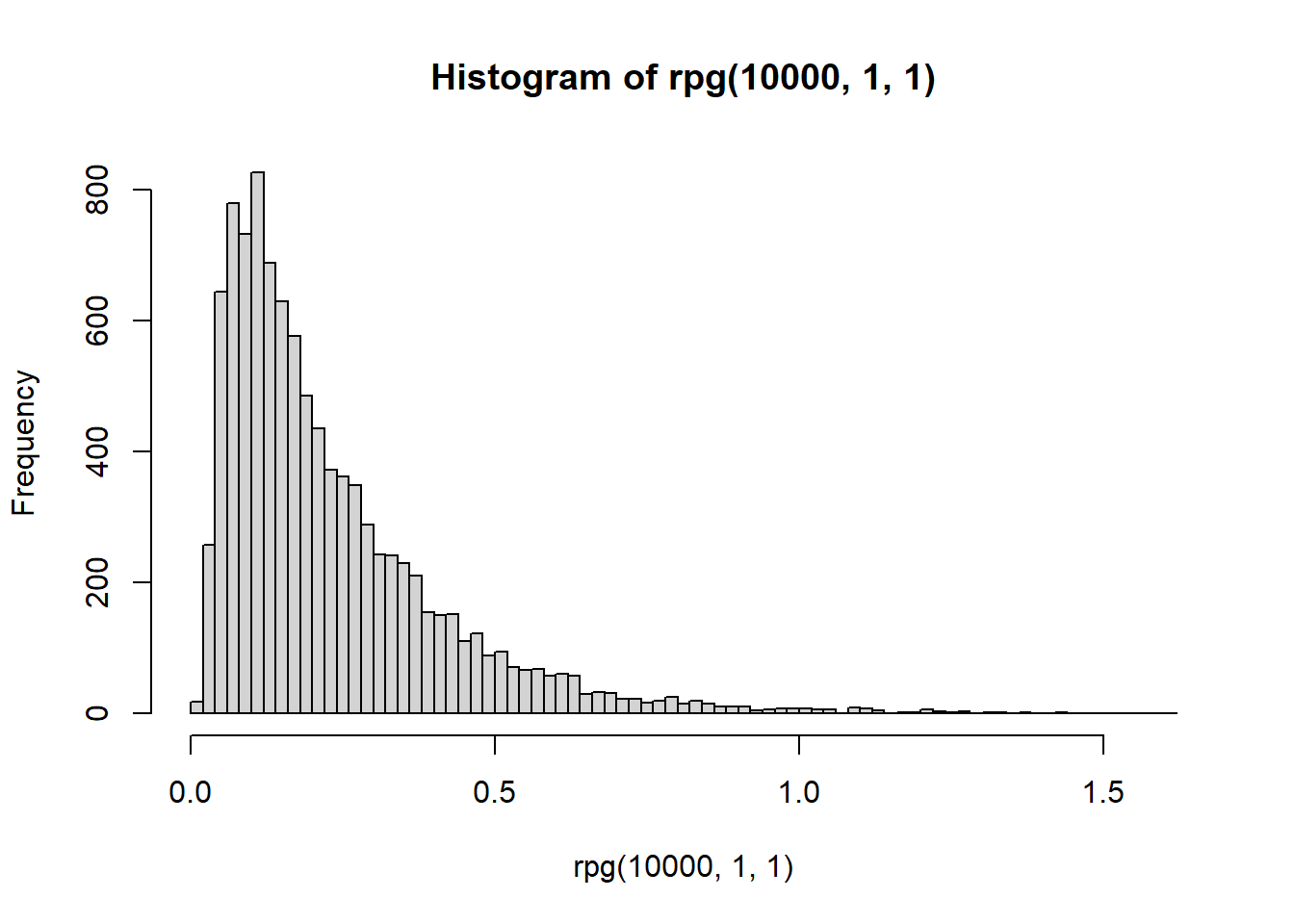

Histogram

library(BayesLogit)

hist(rpg(1e4,0.1,0),breaks = 100)

hist(rpg(1e4,1,0),breaks = 100)

hist(rpg(1e4,10,0),breaks = 100)

hist(rpg(1e4,100,0),breaks = 100)

hist(rpg(1e4,1,0),breaks = 100)

hist(rpg(1e4,1,-1),breaks = 100)

hist(rpg(1e4,1,1),breaks = 100)

hist(rpg(1e4,1,100),breaks = 100)

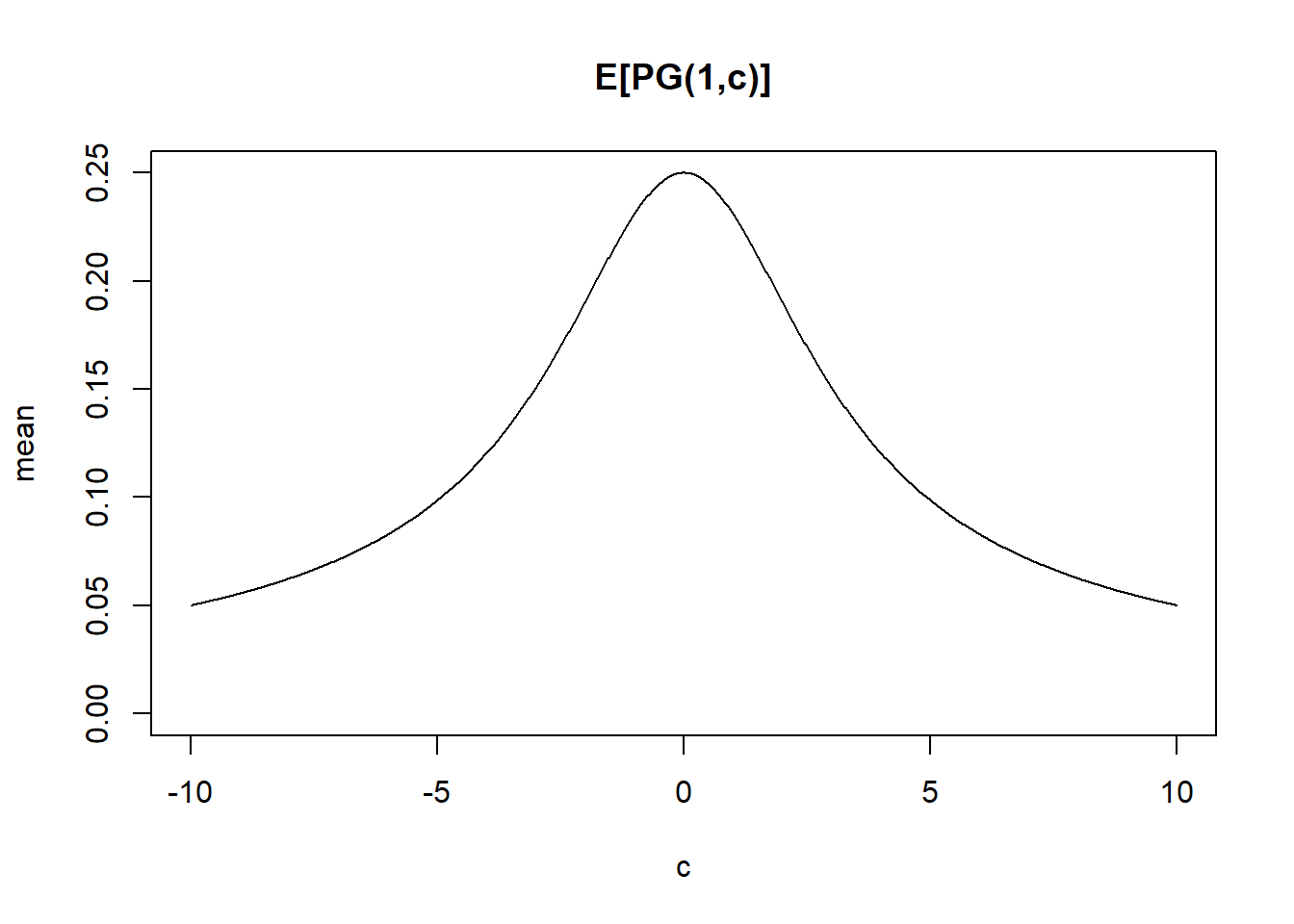

Expectation

pg_mean = function(b,c){b/(2*c)*tanh(c/2)}

cc = seq(-10,10,length=1000)

plot(cc,pg_mean(1,cc),type="l",ylim=c(0,0.25), main="E[PG(1,c)]",xlab='c',ylab='mean')

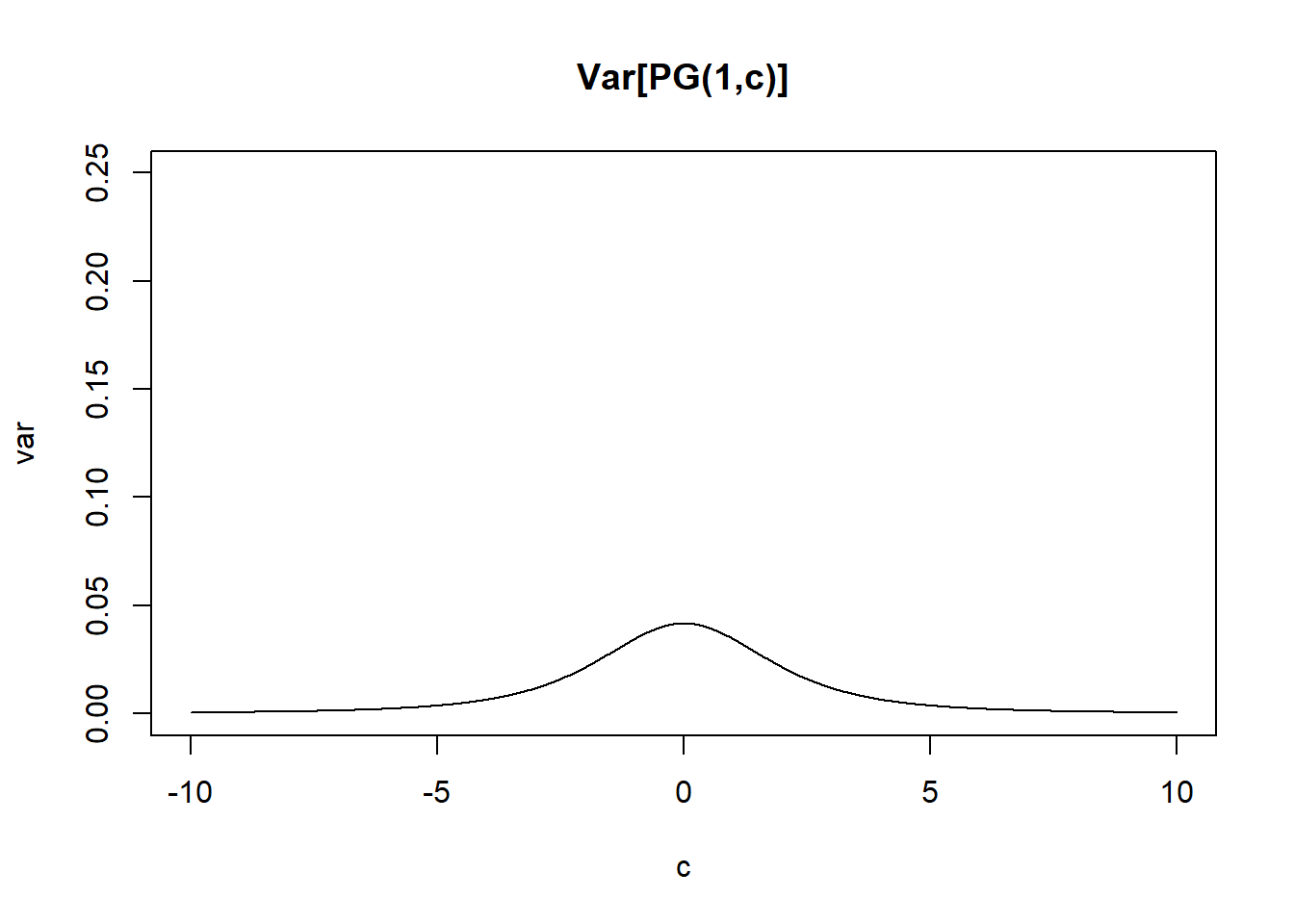

Variance

\[var(\omega) = \frac{b}{4c^3}(sinh(c)-c)sech^2(c/2)\]

pg_var = function(b,c){b/(4*c^3)*(sinh(c)-c)*(1/cosh(c/2))^2}

plot(cc,pg_var(1,cc),type="l",ylim=c(0,0.25), main="Var[PG(1,c)]",xlab='c',ylab='var')

Bayesian logistics regression

# install_github('jwindle/BayesLogit',INSTALL_opts = '--no-lock')

set.seed(12345)

N = 300;

P = 2;

##------------------------------------------------------------------------------

## Correlated predictors

rho = 0.5

Sig = matrix(rho, nrow=P, ncol=P); diag(Sig) = 1.0;

U = chol(Sig);

X = matrix(rnorm(N*P), nrow=N, ncol=P) %*% U;

##------------------------------------------------------------------------------

## Sparse predictors

X = matrix(rnorm(N*P, sd=1), nrow=N, ncol=P);

vX = as.numeric(X);

low = vX < quantile(vX, 0.5)

high = vX > quantile(vX, 0.5);

X[low] = 0;

X[!low] = 1;

beta = rnorm(P, mean=0, sd=1);

## beta = c(1.0, 0.4);

## X = matrix(rnorm(N*P), nrow=N, ncol=P);

psi = X %*% beta;

p = exp(psi) / (1 + exp(psi));

y = rbinom(N, 1, p);

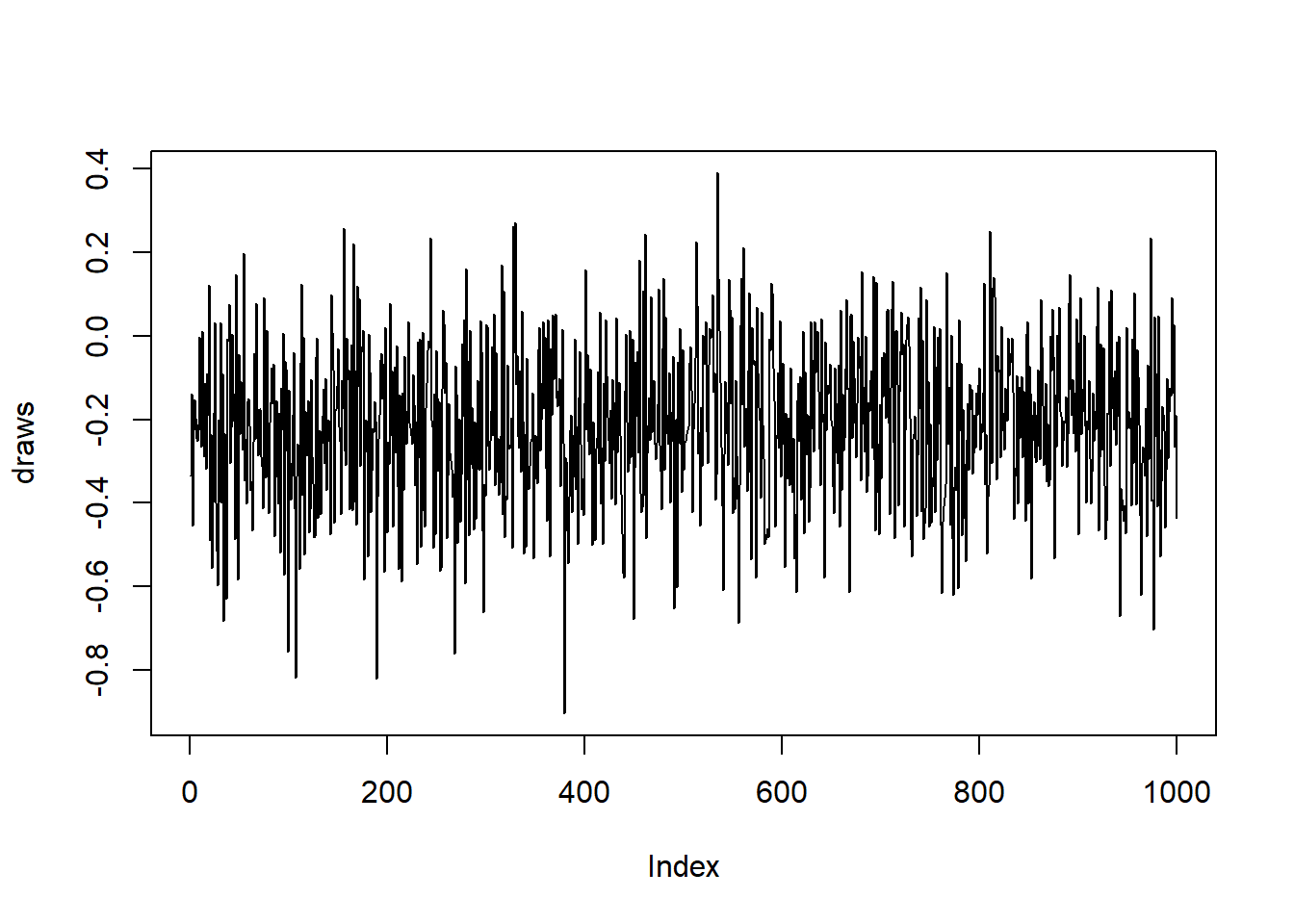

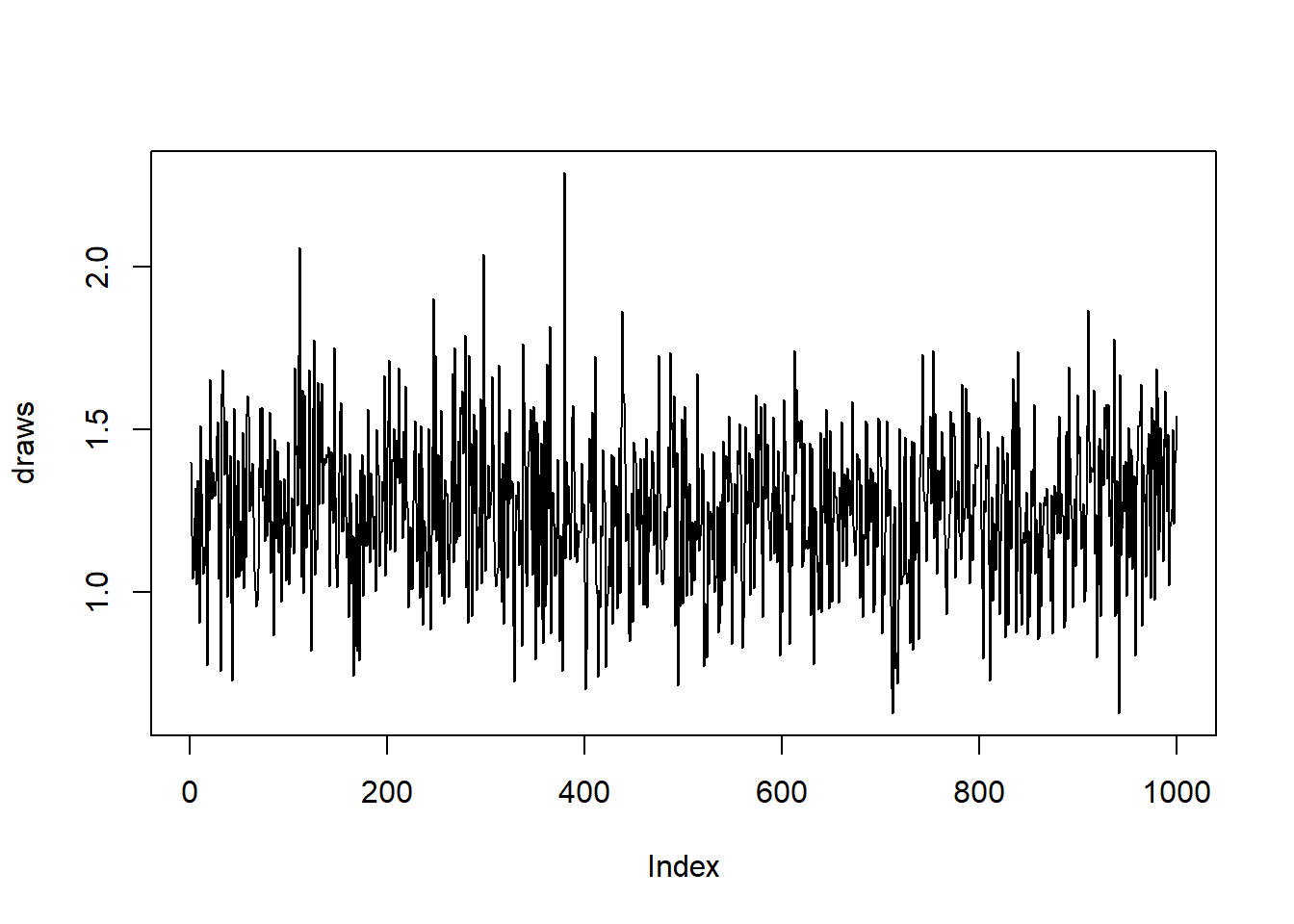

psw.fit = logit.R(y,X)[1] "LogitPG: Iteration 500"

[1] "LogitPG: Iteration 1000"

[1] "LogitPG: Iteration 1500"beta[1] -0.2678817 0.9372880apply(psw.fit$beta,2,mean)[1] -0.2117958 1.2579055plot(psw.fit$beta[,1],type='l',ylab='draws')

plot(psw.fit$beta[,2],type='l',ylab='draws')

source('code/logistic.R')

prior <- list(mu = rep(0,P), Sigma = diag(1,P))

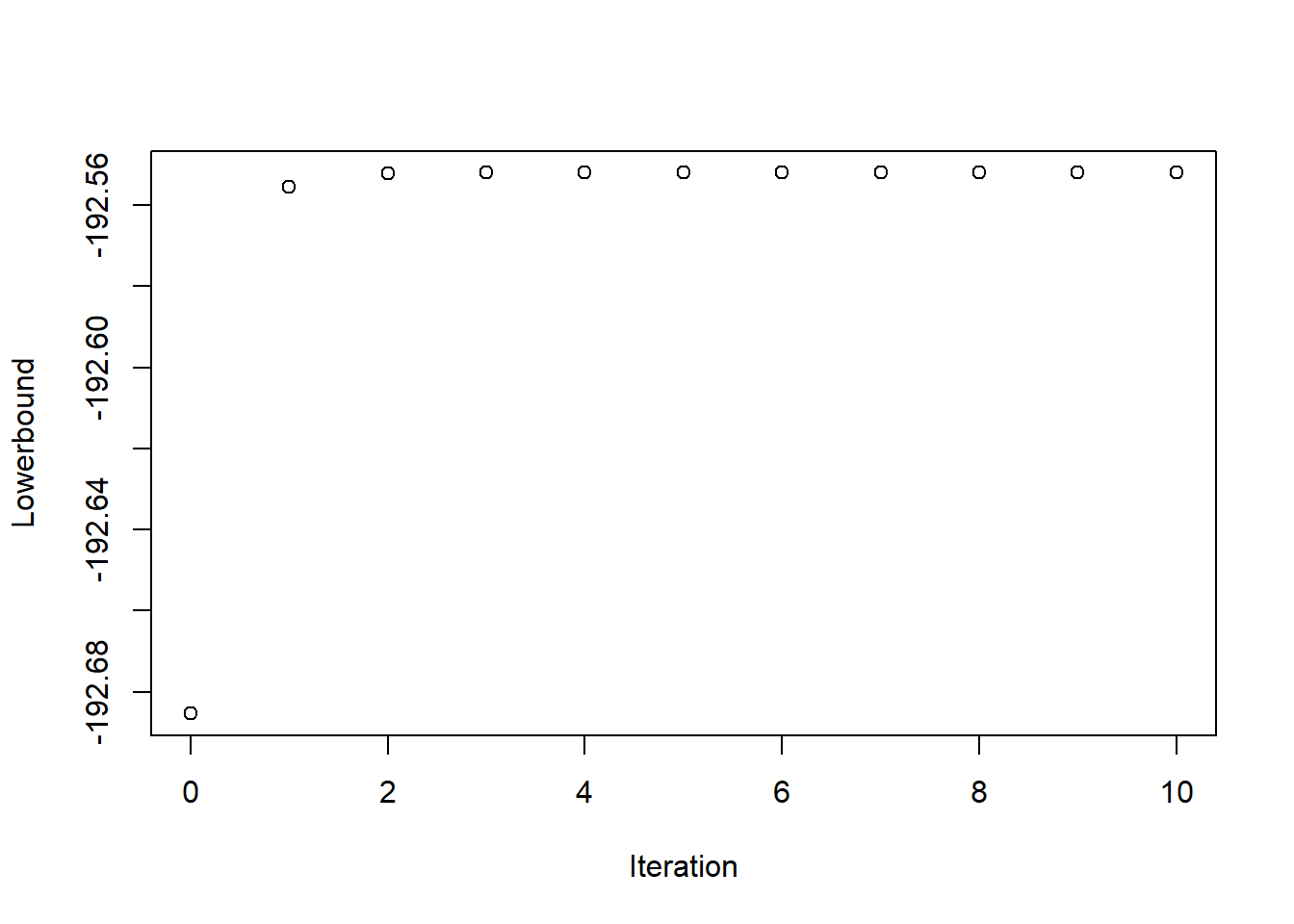

dr.fit = logit_CAVI(X,y,prior)

dr.fit$mu [,1]

[1,] -0.1738759

[2,] 1.1815105plot(dr.fit$Convergence)

Reference

Polson, N. G., Scott, J. G., & Windle, J. (2013). Bayesian inference for logistic models using Pólya–Gamma latent variables. Journal of the American statistical Association, 108(504), 1339-1349.

sessionInfo()R version 4.0.1 (2020-06-06)

Platform: x86_64-w64-mingw32/x64 (64-bit)

Running under: Windows 10 x64 (build 19041)

Matrix products: default

locale:

[1] LC_COLLATE=English_United States.1252

[2] LC_CTYPE=English_United States.1252

[3] LC_MONETARY=English_United States.1252

[4] LC_NUMERIC=C

[5] LC_TIME=English_United States.1252

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] BayesLogit_2.1.1 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] Rcpp_1.0.4.6 rprojroot_1.3-2 digest_0.6.25 later_1.1.0.1

[5] R6_2.4.1 backports_1.1.7 git2r_0.27.1 magrittr_1.5

[9] evaluate_0.14 stringi_1.4.6 rlang_0.4.6 fs_1.4.1

[13] promises_1.1.0 whisker_0.4 rmarkdown_2.3 tools_4.0.1

[17] stringr_1.4.0 glue_1.4.1 httpuv_1.5.4 xfun_0.14

[21] yaml_2.2.1 compiler_4.0.1 htmltools_0.5.0 knitr_1.28